In July, I was in Mexico City for Wikimania, the global Wikimedia community’s annual conference. I was there to kickstart the adaptation of Wiki Ed’s Dashboard system for use beyond our programs, and to catch up with the latest developments in the Wikimedia technology ecosystem. I’ll give a quick overview of what I was up to, which involves some work to help groups adapt our tools for other projects, some interesting quality assessment tools for articles, and some news about our plagiarism detection infrastructure.

The Wiki Education Foundation’s programs focus on the United States and Canada. However, the tools we’re building could be very useful to the broader Wikimedia community. They could be adapted for education programs in other countries and languages, as well as other outreach projects, such as Art+Feminism.

During the Wikimania Hackathon — two days of developers collaborating on whatever projects catch their fancy — I worked with two Wikimedia Foundation software engineers, Andrew Green and Adam Wight. We took the first steps toward making dashboards available for other projects. By the end of the hackathon, we had a new version up and running at outreachdashboard.wmflabs.org, which some English Wikipedia edit-a-thons and other outreach programs plan to try out soon.

The next steps for the general community version of the dashboard will be to see how it performs for English Wikipedia projects. We’ll fix any problems that turn up, and then move toward full internationalization. The dashboard interface is already being translated at translatewiki.net. For ideal use across Wikipedia languages, though, we need to update the dashboard’s core to track languages and handle data from multiple language versions of Wikipedia at once. (If you’re interested in hacking on it, and you know some Ruby on Rails, get in touch!)

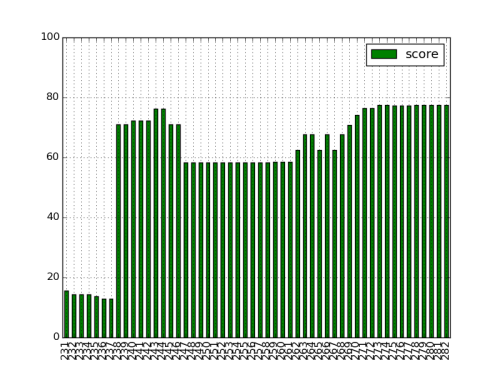

Another, more serendipitous bit of exploration at Wikimania was based on the “revision scoring as a service” project. Wikimedia Foundation researcher Aaron Halfaker and several collaborators have been developing artificial intelligence techniques to evaluate Wikipedia revisions and diffs. Those AIs make predictions about whether changes will be reverted, and what rating an article would have on the “Wikipedia 1.0” quality scale. I applied the rating predictions to articles in some of Wiki Ed’s courses from recent terms. I converted the predictions into a numerical score, and charted the change in quality from the beginning of the course to the end. We came up with some really interesting results. Here are the revision scoring model’s estimates for the quality of “Antitheatrical prejudice”, a student editor’s project from a fall 2014 theater history course:

The chart tells an interesting story. In the first revisions, the student created a short stub, just a single sentence. Then, in one big edit, it was expanded to a full article. The next significant change in the quality estimate is a drop. What happened there? As it turns out, the images in that initial version were deleted, resulting in a big hit to estimated quality. In subsequent revisions, the student editor did a lot of copyediting, which didn’t affect the estimate very much. Eventually, the student editor found some appropriately licensed images to illustrate the article (and bumped up the quality estimate to a new high).

This kind of data could help surface major events in the evolution of an article, and identify edits worth investigating more closely. We shouldn’t think of it in terms of article quality per se, as the model doesn’t understand the meaning, or even the grammar, of the text. But as a measure of structural completeness, it seems to do a pretty good job.
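To make the "predictions into a numerical score" step more concrete, here is a minimal sketch of one way it could be done: treat the model's output as a probability for each rating class and take the probability-weighted average. This is an illustration under assumptions, not necessarily the method behind the chart above. The class weights, function names, and sample probabilities are all hypothetical; only the class labels (Stub, Start, C, B, GA, FA) come from English Wikipedia's assessment scale.

```python
# Hypothetical weights: one point per step up the English Wikipedia
# assessment scale. Any monotonic weighting would work similarly.
CLASS_WEIGHTS = {"Stub": 0, "Start": 1, "C": 2, "B": 3, "GA": 4, "FA": 5}


def weighted_score(probabilities):
    """Collapse per-class probabilities into a single number.

    `probabilities` maps a rating class to the model's predicted
    probability for that class. The result is the expected position
    on the scale, from 0 (certainly a Stub) to 5 (certainly FA).
    """
    return sum(CLASS_WEIGHTS[label] * p for label, p in probabilities.items())


def quality_change(first_revision, last_revision):
    """Difference in weighted score between two revisions' predictions."""
    return weighted_score(last_revision) - weighted_score(first_revision)


# Made-up predictions for illustration only, not real model output.
stub_like = {"Stub": 0.9, "Start": 0.1, "C": 0.0, "B": 0.0, "GA": 0.0, "FA": 0.0}
developed = {"Stub": 0.0, "Start": 0.1, "C": 0.5, "B": 0.4, "GA": 0.0, "FA": 0.0}

if __name__ == "__main__":
    print(round(weighted_score(stub_like), 2))
    print(round(weighted_score(developed), 2))
    print(round(quality_change(stub_like, developed), 2))
```

Scoring every revision of an article this way and plotting the results in order would give a curve like the one described above, where a large edit (or a batch of deleted images) shows up as a visible jump or drop.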

Finally, I had a chance to discuss the future of our plagiarism-checking infrastructure with James Heilman and Eran Rosenthal, who have been working on the EranBot copy-and-paste detection system. The next version will involve a queryable database of checked revisions, which could be used not only by EranBot, but by other services such as the Wiki Ed Dashboard. I’ll be tackling that project in the coming months, and we’re hoping to build on the exciting work that Eran has started.

On all these fronts, I’m really excited about the collaboration that’s coming!

Sage Ross

Product Manager, Digital Services

Photo of Wikimania Hackathon by Ralf Roletschek / fahrradmonteur.de [GFDL 1.2], via Wikimedia Commons.